AI moderation explained: from rule-based filters to LLMs

How large language models improve group chat and community safety

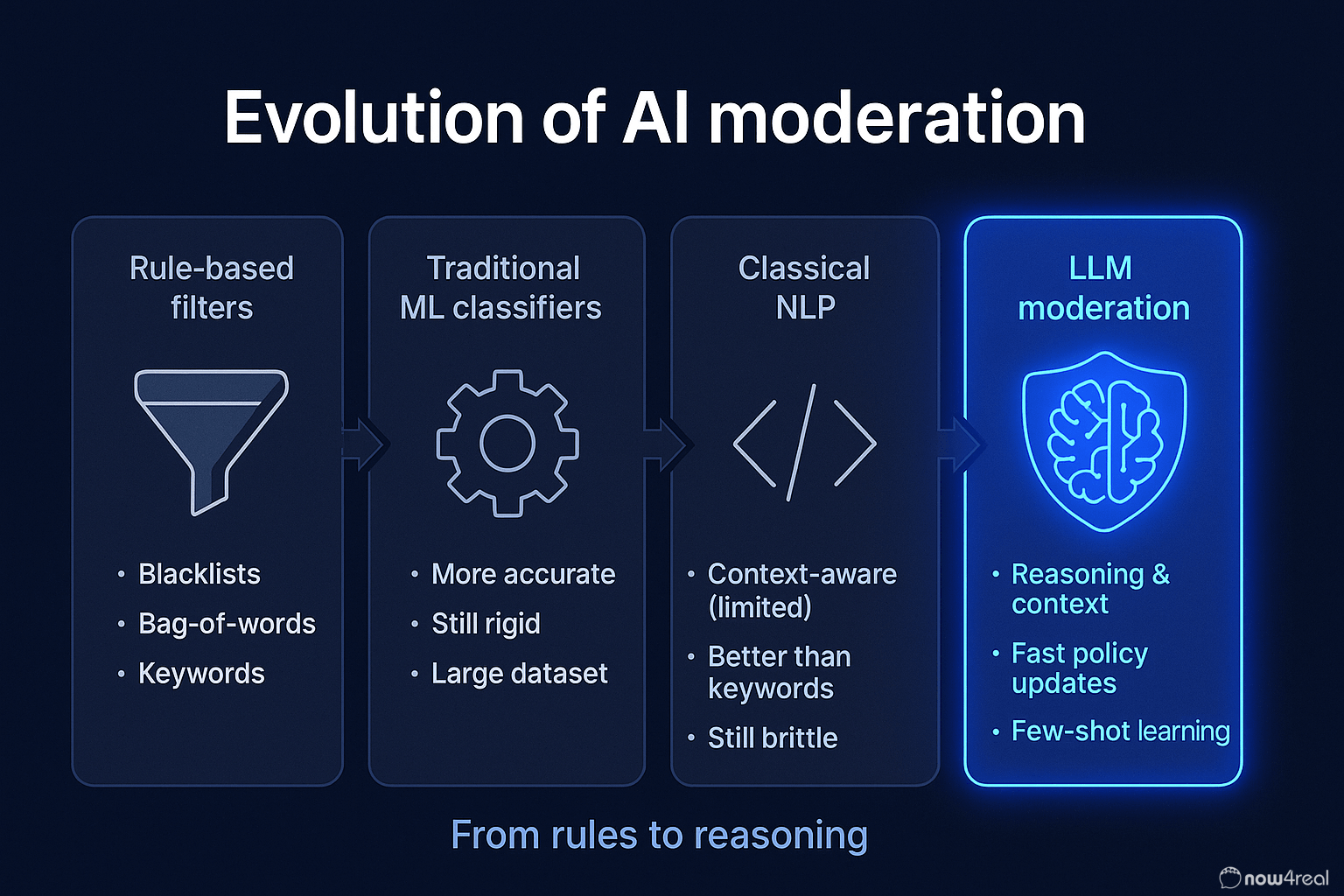

Traditional approaches to content moderation

For years, online platforms relied on a mix of human moderators and basic algorithmic filters to keep communities safe. In the early days, human moderation was the primary method – people manually reviewed posts and comments to remove content violating community guidelines. This approach, while precise, was slow, labor-intensive, and prone to inconsistency due to individual biases or fatigue. To scale up content review, platforms began introducing automated moderation tools. These early AI-driven methods were often based on natural language processing (NLP) techniques that scanned text for banned keywords or phrases (techtarget.com). For example, a simple filter might automatically reject any post containing certain offensive words and alert a human moderator. This kind of keyword matching and blacklist filtering was one of the first uses of AI (albeit rudimentary) in content moderation.
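
The keyword filter described above can be sketched in a few lines. This is a minimal illustration, not any platform's actual implementation: the blocklist terms, the `check_post` name, and the shape of the returned dictionary are all assumptions made for the example.

```python
import re

# Hypothetical blocklist; a real deployment would load its own terms.
BANNED_TERMS = {"idiot", "scumbag"}

def check_post(text: str) -> dict:
    """Reject a post if any banned term appears as a whole word."""
    words = re.findall(r"[a-z']+", text.lower())
    hits = sorted(set(words) & BANNED_TERMS)
    return {
        "allowed": not hits,
        "matched_terms": hits,
        # A rejected post is also flagged so a human moderator is alerted.
        "alert_moderator": bool(hits),
    }

print(check_post("You are an idiot"))
print(check_post("Great article, thanks!"))
```

The filter only knows the exact words on its list, which is why it is fast and also why it is easy to evade.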

In parallel, more advanced machine learning classifiers emerged. Traditional machine learning (prior to the advent of modern large language models) involved training models on labeled examples of allowed vs. disallowed content. These models – ranging from logistic regression classifiers to early neural networks – could automatically flag content that resembled what they were trained to recognize as harmful. For instance, platforms employed classifiers to detect spam, nudity in images, or hate speech by learning from thousands of prior examples. Over time, tech companies also developed computer vision algorithms to moderate images/videos and audio analysis for speech, complementing text-based NLP filters.
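
To make "training on labeled examples" concrete, here is a toy text classifier written with only the standard library. It uses naive Bayes rather than the logistic regression mentioned above, simply because it is the shortest classifier to write without third-party packages. The training sentences and labels are invented for illustration.

```python
import math
from collections import Counter

def tokenize(text):
    return text.lower().split()

def train(examples):
    """examples: list of (text, label). Returns the counts the model needs."""
    word_counts, label_counts, vocab = {}, Counter(), set()
    for text, label in examples:
        label_counts[label] += 1
        counts = word_counts.setdefault(label, Counter())
        for tok in tokenize(text):
            counts[tok] += 1
            vocab.add(tok)
    return word_counts, label_counts, vocab

def predict(model, text):
    """Return the label with the highest log-probability for the text."""
    word_counts, label_counts, vocab = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        score = math.log(label_counts[label] / total)
        denom = sum(word_counts[label].values()) + len(vocab)
        for tok in tokenize(text):
            # Laplace smoothing so unseen words do not zero out the score.
            score += math.log((word_counts[label][tok] + 1) / denom)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Invented training data, far smaller than any real moderation dataset.
TRAINING = [
    ("win free money now", "disallowed"),
    ("click here for free prizes", "disallowed"),
    ("free money click now", "disallowed"),
    ("see you at the meeting tomorrow", "allowed"),
    ("thanks for the helpful article", "allowed"),
    ("great meeting see you soon", "allowed"),
]

model = train(TRAINING)
print(predict(model, "free prizes click here"))
print(predict(model, "thanks see you at the meeting"))
```

The model only generalizes to wording that resembles its training examples, which is the dependence on labeled data that the paragraph above describes.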

This combination of automated filters and human oversight became the standard. Automated moderation could handle the easy cases (like obvious spam or profanity) and operate at high speed, while human moderators focused on the nuanced decisions. As one industry expert explained, AI often acted as “the first layer” to filter out clear-cut violations, with humans brought in for the trickier judgments. This hybrid approach was necessary because older AI systems had significant limitations.

Limitations of early AI moderation

While early NLP and machine-learning moderation tools helped increase scale, they had notable shortcomings. A basic keyword filter might flag “breast cancer support” as inappropriate due to the word “breast,” or fail to catch cleverly masked hate speech written in slang or code words. Context and nuance were the weak points: a static model could decide whether a single message contained a blacklisted term, but it couldn’t understand sarcasm, decipher cultural context, or detect subtle harassment that doesn’t rely on obvious slurs. This led to false positives (innocent content getting flagged) and false negatives (harmful content slipping through) in many moderation pipelines.
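
Both failure modes are easy to reproduce. The sketch below assumes a deliberately naive substring filter with a two-term blocklist chosen for illustration. Character substitution stands in here for the broader family of masking tricks.

```python
BLOCKLIST = ["breast", "hate"]  # hypothetical terms, for illustration only

def naive_flag(text: str) -> bool:
    """Flag text if any blocklisted term appears anywhere in it."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKLIST)

# False positive: a harmless support message trips the filter.
print(naive_flag("Join our breast cancer support group"))  # True

# False negative: a trivial character substitution evades it.
print(naive_flag("I h4te people like you"))  # False
```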

Another issue was the rigidity and maintenance of traditional ML models. These models had to be trained on large annotated datasets of policy-violating content. Creating and updating these datasets was an expensive, time-consuming process requiring human annotators (arxiv.org). If community standards changed or bad actors evolved new tactics, the old models often failed to generalize. A classifier trained on one platform’s data might not work well for another community or a different language without retraining. In essence, older content moderation models lacked adaptability – updating them meant going back to the lab for new training data and model tweaks. This slow update cycle could not keep pace with the internet’s ever-changing content.

Finally, purely automated systems lacked the judgment and flexibility of human mods. Context is everything in moderation: the same phrase could be satire or hate speech depending on context, something early algorithms couldn’t reliably discern. Thus, moderation teams had to keep humans “in the loop” to review borderline cases and handle appeals. This made moderation workflows complex and still relatively slow, since anything not obvious had to wait for human review. Clearly, a more advanced solution was needed to tackle the scale, speed, and subtlety challenges of online content moderation.

The Rise of Large Language Models in Moderation

Enter Large Language Models (LLMs) – a new generation of AI that is transforming how content moderation is done. LLMs like OpenAI’s GPT-5 and similar models are trained on massive swaths of internet text, enabling them to understand and generate human-like language. This is a fundamentally different approach from the older task-specific NLP models. Traditional content classifiers were trained on relatively narrow datasets to perform a single job (e.g., detect hate speech). By contrast, an LLM is first pre-trained on a broad corpus (billions of words, encompassing diverse topics and styles) and can then be guided or fine-tuned for specific moderation tasks (arxiv.org). Thanks to their expansive training, LLMs come to the table with a rich understanding of language, context, and even subtle cues that earlier models would miss.

Over the past couple of years, we’ve seen LLMs quickly move from the research labs into real-world moderation tools. As early as 2020, some companies like Meta experimented with using large language models to detect hate speech in Facebook content. But the trend really accelerated from late 2022, as powerful LLMs such as GPT-3.5 (the model behind ChatGPT) and later GPT-4 became widely available. In 2023, OpenAI publicly detailed how it uses GPT-4 to assist in content moderation decisions and policy development. The appeal is clear: GPT-4 can interpret complex policy documents and apply nuanced rules consistently, something that used to require extensive training for human moderators. OpenAI reported that using GPT-4 for moderation allows policy changes to be rolled out in hours instead of months, because the model can adapt to new guidelines almost instantly (openai.com). This suggests a future where AI systems can quickly adjust to emerging threats or rule changes without the lengthy re-training cycle of older models.

Crucially, LLMs have significantly closed the gap between machine judgment and human judgment in moderation tasks. Early studies indicate that modern LLMs can match or even exceed the accuracy of traditional moderation algorithms. For example, one study found GPT-based moderation was as accurate as human crowdworkers for certain zero-shot classification tasks, and another showed LLMs performing on par with specialized models in cyberbullying detection. While these models are not perfect, their ability to grasp context gives them an edge. Generative AI has effectively surpassed older NLP in capability – “multimodal LLMs can understand things like sarcasm, coded language or cultural nuance. Traditional natural language understanding tools are deficient in these areas,” as one expert noted (techtarget.com). In other words, LLMs bring a level of comprehension to content that was previously very hard for AI to achieve.

The industry is taking notice. By 2024, we started seeing large platforms pivot to AI-first moderation in a big way. In a striking example, TikTok reportedly laid off 700 human moderators in 2024, replacing them with AI moderation bots. This shift was driven by necessity – with explosive growth in user-generated videos (and a rising tide of AI-generated content as well), manual moderation simply couldn’t keep up. Companies are adopting the mantra of “fighting fire with fire”, using AI to moderate the vast waves of content that AI itself is helping produce. The trend suggests that AI moderation at scale is becoming the norm, and LLMs are at the forefront of this new wave. Platforms are finding that a well-tuned LLM can handle many of the judgment calls that used to perplex simpler algorithms.

Advantages of LLM-Powered Moderation

Why are LLMs considered a game-changer for content moderation compared to previous methods? There are several key advantages:

- Nuanced Understanding: LLMs can parse context in a conversation or post. They don’t just scan for keywords; they “read” the text in a more human-like way. This means an LLM is better at understanding why a piece of content might be harmful. It can detect sarcasm, innuendo, or context-dependent hate speech that a keyword-based filter would miss. For example, an LLM could recognize that the sentence “Yeah, that was so smart of you 🙄” is likely a sarcastic insult, whereas a keyword-based model might see no explicit profanity and consider it harmless. This deeper comprehension dramatically reduces the loopholes that bad actors can exploit through slang or coded language.

- Consistency and Fairness: Because an LLM follows the guidelines it’s given very closely and doesn’t get tired or upset, it can apply moderation rules more consistently than a team of dozens of humans might. If well-configured, the LLM will interpret the policy the same way every time, avoiding the variability where one moderator might be stricter than another. OpenAI observed that GPT-4 can offer “more consistent labeling” of content according to policy definitions, since the AI interprets even granular differences in policy wording carefully and updates instantly across the board (openai.com). This uniformity can make moderation decisions feel more transparent and fair to users, as similar content gets treated similarly across the platform.

- Adaptability and Speed: LLMs can adapt to new moderation rules or emerging content trends extremely quickly. If a platform needs to update its content policy (for instance, to ban a new form of harassment or misinformation technique), an LLM can start enforcing the updated rules as soon as it’s given the new policy. The iteration cycle – previously months of retraining or re-educating human moderators – shrinks to mere hours or less. Moreover, LLM-based systems can be updated through prompts or fine-tuning without always requiring a full re-training from scratch. This agility is crucial in the fast-moving world of online discourse, where new memes or malicious tactics can go viral overnight. An LLM is generally more flexible in handling these novel cases because of its broad pre-training knowledge.

- Scalability: Modern LLMs, when deployed efficiently, can moderate enormous volumes of content at speed. They can be integrated via APIs to scan text, images (with multimodal models), or other media continuously. While there is a computational cost, the benefit is that a single well-optimized AI system can do the work that might require an army of human moderators – and do it 24/7 without breaks. As hardware and model optimizations improve, the throughput of AI moderation keeps increasing. This is how platforms like TikTok have justified relying more on AI – the sheer scale of content (billions of posts) mandates an AI-driven approach. LLMs are helping meet that scale with fewer hands-on human checks.

- Reduced Human Exposure to Toxic Content: An often overlooked benefit is the ethical and mental health advantage of having AI handle the worst content. Human moderators in the past have suffered psychological harm from constant exposure to disturbing videos or hate speech. Using LLMs as a first line of defense can spare humans from reviewing the most toxic content except when absolutely necessary. As one article notes, the hope is that AI can “relieve the mental burden of a large number of human moderators” by handling the bulk of ugly tasks (openai.com). Humans can then focus on oversight and the complex edge cases, ideally with far less exposure to the worst material.
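
The adaptability point is easiest to see in code: when the policy lives in the prompt, changing the rules means changing a string. The sketch below only builds the prompt text and does not call any model. The `build_moderation_prompt` name, the prompt wording, and the ALLOW/BLOCK answer format are assumptions for illustration, not a documented vendor format.

```python
def build_moderation_prompt(policy: str, message: str) -> str:
    """Combine the current policy and a user message into one instruction."""
    return (
        "You are a content moderator. Apply the policy below.\n"
        f"POLICY:\n{policy.strip()}\n\n"
        f"MESSAGE:\n{message.strip()}\n\n"
        "Answer with exactly one word: ALLOW or BLOCK."
    )

# Hypothetical policy texts; version 2 adds a rule without any retraining.
policy_v1 = "Disallow hate speech and profanity."
policy_v2 = policy_v1 + " Also disallow coordinated harassment of named individuals."

prompt = build_moderation_prompt(policy_v2, "Everyone go spam her profile")
print(prompt)
```

Whichever model receives this prompt sees the updated rule on the very next message, which is where the hours-instead-of-months iteration cycle comes from.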

In summary, LLMs bring greater intelligence and efficiency to moderation. They combine the strengths of automated systems (speed, consistency, scale) with a big step closer to human-like comprehension of language. This doesn’t mean they are infallible, but it explains why so many companies are excited about LLM-driven moderation as the future.

Challenges of Using LLMs for Moderation

It’s important to note that adopting LLMs for content moderation is not without challenges. Firstly, accuracy is not perfect – even advanced models can misclassify content, especially if the moderation guidelines are ambiguous or if the content is very subtle. In fact, researchers have found that out-of-the-box LLMs (without fine-tuning) may struggle with highly contextual hate speech or nuanced harassment, indicating that fine-tuning on specific moderation tasks is often necessary for best results. An LLM might be extremely knowledgeable in general, but it still needs alignment with each platform’s unique policy and values.

False positives and negatives remain an issue. An LLM might sometimes misinterpret the intent of a message. For example, it could block a sarcastic joke thinking it’s serious harassment, or conversely, overlook a slyly worded insult. The Now4real team, which uses an LLM-based moderation system for live chats, acknowledges that while the AI “does a great job at filtering inappropriate content, it is not flawless” – occasionally a legitimate message may be mistakenly blocked, or a bad message might slip past the filter. Such errors mean that human oversight and user appeals mechanisms should still be in place. Especially for borderline cases, having moderators review AI decisions (or users able to dispute a removal) is crucial for maintaining trust in the system.
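
One way to keep these errors visible is to have humans review a sample of AI decisions and compute both error rates from it. The sketch below assumes each reviewed item is a pair of booleans (what the AI did, what the human reviewer concluded). The sample data is invented.

```python
def error_rates(reviewed):
    """reviewed: list of (ai_blocked, actually_violating) boolean pairs."""
    fp = sum(1 for ai, truth in reviewed if ai and not truth)
    fn = sum(1 for ai, truth in reviewed if not ai and truth)
    benign = sum(1 for _, truth in reviewed if not truth)
    violating = sum(1 for _, truth in reviewed if truth)
    return {
        # Share of harmless messages that were wrongly blocked.
        "false_positive_rate": fp / benign if benign else 0.0,
        # Share of violating messages that slipped through.
        "false_negative_rate": fn / violating if violating else 0.0,
    }

# Invented review sample: 5 benign messages, 3 violating ones.
sample = [
    (True, True), (True, False), (False, False), (False, False),
    (False, True), (True, True), (False, False), (False, False),
]
print(error_rates(sample))
```

Tracking the two rates separately matters because tightening a policy usually lowers one while raising the other.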

Another challenge is latency and resources. LLMs are computationally heavy, and running them in real time for every piece of user content can be expensive and sometimes slow. Moderating a single piece of content can take significantly longer than with previous AI approaches that relied on simpler algorithms, though in some cases smaller or distilled models can offer a good compromise, balancing computational efficiency with sufficient moderation accuracy. In high-traffic scenarios, this latency could become a bottleneck unless the system is optimized or only applied to content that passes initial cheap filters. Some platforms address latency by using a tiered approach: simple rules or smaller models quickly filter the obvious cases, and the LLM is reserved for more nuanced judgments. Over time, as AI research advances, we expect newer models and techniques (like further distillation or optimized local models) will reduce these performance issues.
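
The tiered approach can be sketched as a cheap rule check that settles the obvious cases and hands everything else to the expensive model. The phrase lists and function names below are hypothetical, and `llm_check` is a stand-in for whatever model call a platform actually uses.

```python
from typing import Callable, Tuple

# Hypothetical rule lists for the cheap first tier.
OBVIOUS_BAD = {"buy followers", "free crypto"}
SAFE_GREETINGS = {"hi", "hello", "thanks"}

def cheap_filter(text: str) -> str:
    """Return 'block', 'allow', or 'unsure' using only inexpensive checks."""
    lowered = text.lower()
    if any(phrase in lowered for phrase in OBVIOUS_BAD):
        return "block"
    if lowered.strip("!. ") in SAFE_GREETINGS:
        return "allow"
    return "unsure"

def moderate(text: str, llm_check: Callable[[str], bool]) -> Tuple[str, str]:
    """Return (decision, tier). llm_check returns True if text should be blocked."""
    verdict = cheap_filter(text)
    if verdict != "unsure":
        return verdict, "rules"
    # Only ambiguous messages pay the latency cost of the LLM.
    return ("block" if llm_check(text) else "allow"), "llm"

def stub_llm(text: str) -> bool:
    """Placeholder for a real model call; flags one word for demonstration."""
    return "worthless" in text.lower()

for msg in ["Free crypto giveaway!", "Hello!", "You are worthless"]:
    print(msg, "->", moderate(msg, stub_llm))
```

How much latency this saves depends on what share of traffic the first tier can settle on its own.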

Finally, there’s the question of bias and transparency. LLMs learn from vast datasets of internet text, which inevitably include biases. If not carefully managed, an LLM might carry over undesired biases into its moderation decisions (e.g., disproportionately flagging content from certain dialects or communities due to skewed training data). Ensuring that the AI’s judgments align with fair and inclusive policies requires ongoing monitoring and refinement. Transparency is also key: platforms are working on ways to explain AI moderation decisions to users, an area that is still evolving given the black-box nature of many LLMs.

In short, LLMs are powerful tools but not a plug-and-play solution. Effective AI moderation using LLMs involves careful policy design, continuous testing, and a hybrid approach where AI and humans each handle what they do best. When implemented thoughtfully, this can greatly improve online safety; if implemented recklessly, it could cause new problems.

Now4real: AI Moderation in Action

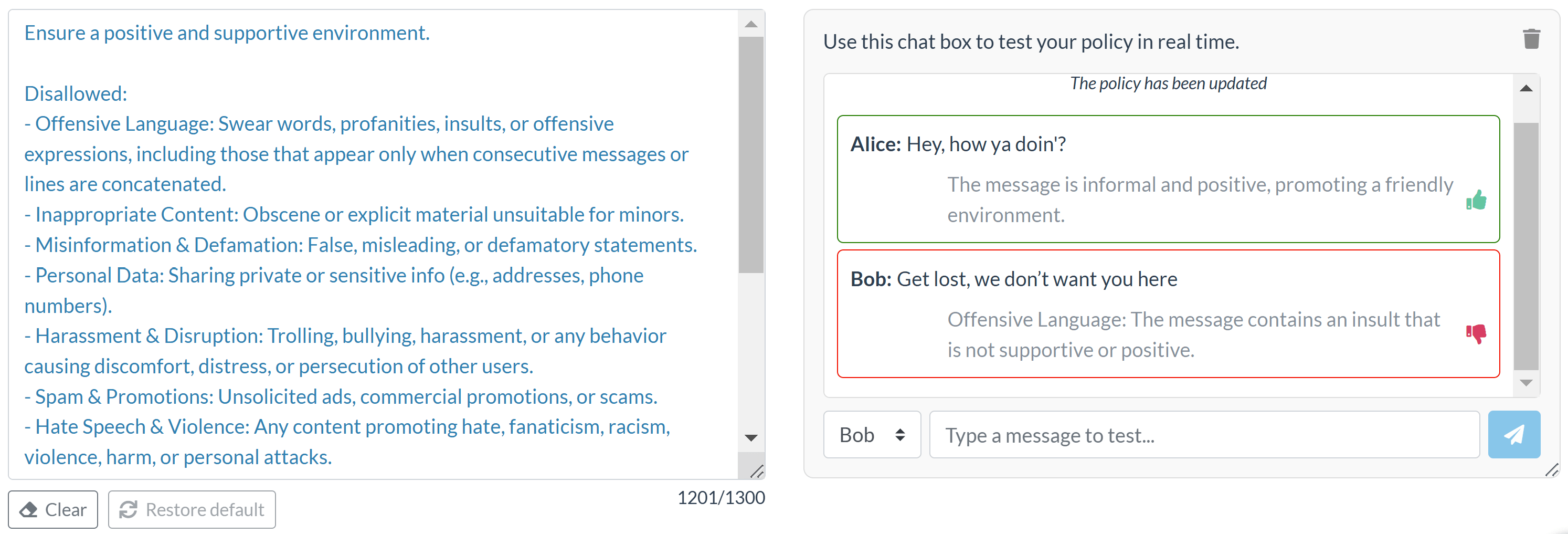

One real-world example of LLM-powered moderation is Now4real, a platform that provides live group chats (or “pagechats”) for websites. By turning website pages into instant chat hubs, Now4real enables real-time conversations among visitors – and it uses AI moderation behind the scenes to keep those chats safe and civil. Specifically, Now4real’s system leverages a large language model to analyze each chat message before it gets published, filtering out anything that violates the community guidelines defined by the site owner.

What makes Now4real’s approach interesting is the level of customization and control it gives to site owners. Administrators can define a custom moderation policy in natural language, outlining what content is disallowed (e.g. hate speech, profanity, off-topic content) and even what is allowed (to clarify grey areas or acceptable slang). The LLM-powered AI then enforces this policy automatically: as users submit messages in the chat, the AI reviews the content in real time and blocks messages that appear to break the rules. If a message is blocked, the user is informed that their message was inappropriate according to the policy, creating a feedback loop that educates users on the community standards. All of this happens almost instantaneously, ensuring that problematic messages never actually appear in the live chat stream.
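
The flow described above (check before publishing, block on violation, tell the user why) can be sketched generically. This is not Now4real's API: the `ChatRoom` class, the `violates` callback, and the feedback wording are invented for illustration, and the callback is a stub where a real system would call its LLM.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class ChatRoom:
    policy: str                              # natural-language policy text
    violates: Callable[[str, str], bool]     # hypothetical (policy, message) check
    published: List[str] = field(default_factory=list)

    def submit(self, user: str, message: str) -> str:
        """Check a message before it reaches the live stream."""
        if self.violates(self.policy, message):
            # The message is never published; the sender gets feedback instead.
            return f"{user}: your message was not published because it breaks the chat policy."
        self.published.append(f"{user}: {message}")
        return "published"

# Stub check for demonstration; a real deployment would query an LLM here.
room = ChatRoom(
    policy="No insults. Friendly banter is fine.",
    violates=lambda policy, msg: "stupid" in msg.lower(),
)
print(room.submit("ana", "Nice goal last night!"))
print(room.submit("bob", "You are stupid"))
print(room.published)
```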

Because it’s powered by an LLM, Now4real’s moderation can understand context to a degree that simple keyword filters never could. For example, the AI considers linguistic patterns, contextual cues, and even the surrounding conversation (such as the flow of previous messages and replies) when evaluating a new message. This means it’s looking not just at individual words, but at how they’re used in sentences, the tone of the exchange, and other subtleties learned from vast datasets. The result is a smarter filter – one that can allow harmless banter or slang, but crack down on genuinely toxic behavior. Of course, as noted earlier, no AI is perfect, and regularly reviewing and refining the moderation policy can help improve accuracy over time. This highlights an important best practice: even with a powerful LLM moderating content, human administrators should periodically review what the AI is flagging or blocking and adjust the guidelines to reduce mistakes.
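
How conversation context reaches the model is not described in the sources cited here, so the following is only one plausible sketch: keep the last few messages in a bounded buffer and render them ahead of the new message. The class name, the window size, and the `[NEW]` marker are assumptions.

```python
from collections import deque

class ConversationContext:
    """Keep the most recent messages so a new one can be judged in context."""

    def __init__(self, max_messages: int = 5):
        # deque with maxlen drops the oldest message automatically.
        self.history = deque(maxlen=max_messages)

    def add(self, author: str, text: str) -> None:
        self.history.append((author, text))

    def render(self, new_author: str, new_text: str) -> str:
        """Return recent history followed by the message under review."""
        lines = [f"{a}: {t}" for a, t in self.history]
        lines.append(f"[NEW] {new_author}: {new_text}")
        return "\n".join(lines)

ctx = ConversationContext(max_messages=2)
ctx.add("ana", "That referee call was awful")
ctx.add("bob", "Totally, he should be fired")
ctx.add("cy", "Calm down, it was a fair call")
print(ctx.render("bob", "You would say that"))
```

A bounded window keeps the prompt short, at the cost of losing anything said earlier in a long thread.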

Overall, Now4real showcases how an LLM-based moderation system can be applied in practice to foster healthy online interactions. By embedding AI moderation at the core of the chat service, website owners can host lively, real-time discussions without relying on an army of moderators. The AI handles the heavy lifting according to the site’s specific rules, and users benefit from a safer, more responsive community space. Now4real’s use of AI moderation is a peek at the future: as more platforms adopt LLMs to police content, we can expect online communities to be not only more scalable, but also more adaptable to the unique norms of each community – all while hopefully reducing toxic content.

Conclusion

The journey from simple NLP filters to advanced LLMs marks a new era in AI content moderation. Traditional methods like keyword spotting and basic machine learning provided the foundation for automated moderation, but they often fell short in understanding context and scale. Large Language Models have emerged as a novel solution, offering a far more sophisticated grasp of language and meaning. They bring clear benefits in consistency, nuance, and efficiency, enabling platforms to moderate content in ways that were impractical just a few years ago. Companies at the cutting edge – from social media giants to specialized services like Now4real – are already demonstrating how LLM-powered moderation can maintain vibrant yet safe online communities.

That said, embracing LLMs doesn’t mean forgetting the lessons of earlier moderation efforts. It remains vital to define clear policies, involve human expertise for oversight, and continuously refine the AI’s output. AI moderation is not a set-and-forget endeavor; it’s an ongoing process of tuning algorithms and rules to fit human values. When done right, the combination of human judgment and LLM efficiency can dramatically improve the health of online spaces. As AI moderation technology continues to evolve, the hope is that it will lead to more respectful, inclusive, and well-managed digital communities – where free expression is balanced with protections against harm, and where the heavy burden on human moderators is lightened by intelligent automation.

In summary, large language models are proving to be a transformative force in the content moderation landscape. By learning from the shortcomings of the past and leveraging the strengths of these new AI systems, online platforms can better navigate the challenges of scale and nuance. The evolution from traditional NLP to LLM-driven moderation is still ongoing, but it signals a future where AI and humans work hand-in-hand to keep our digital spaces safe and civil. With responsible implementation, LLMs may indeed fulfill their promise as the cornerstone of the next generation of AI moderation tools.

Sources:

- Weldon, D. “6 types of AI content moderation and how they work.” TechTarget (2025). techtarget.com
- OpenAI. “Using GPT-4 for content moderation.” OpenAI Blog (2023). openai.com
- Gao, L. “Content Moderation by LLM: From Accuracy to Legitimacy.” arXiv (2024). arxiv.org
- Now4real. “AI Moderation – Overview and How it works.” Now4real Documentation. now4real.com

January 7, 2026

Originally published: August 27, 2025
